Beyond resume polishing

ResumeRavenPro helps job seekers turn role targets, experience, projects, and learning into a clearer career story.

CloudRaven Labs

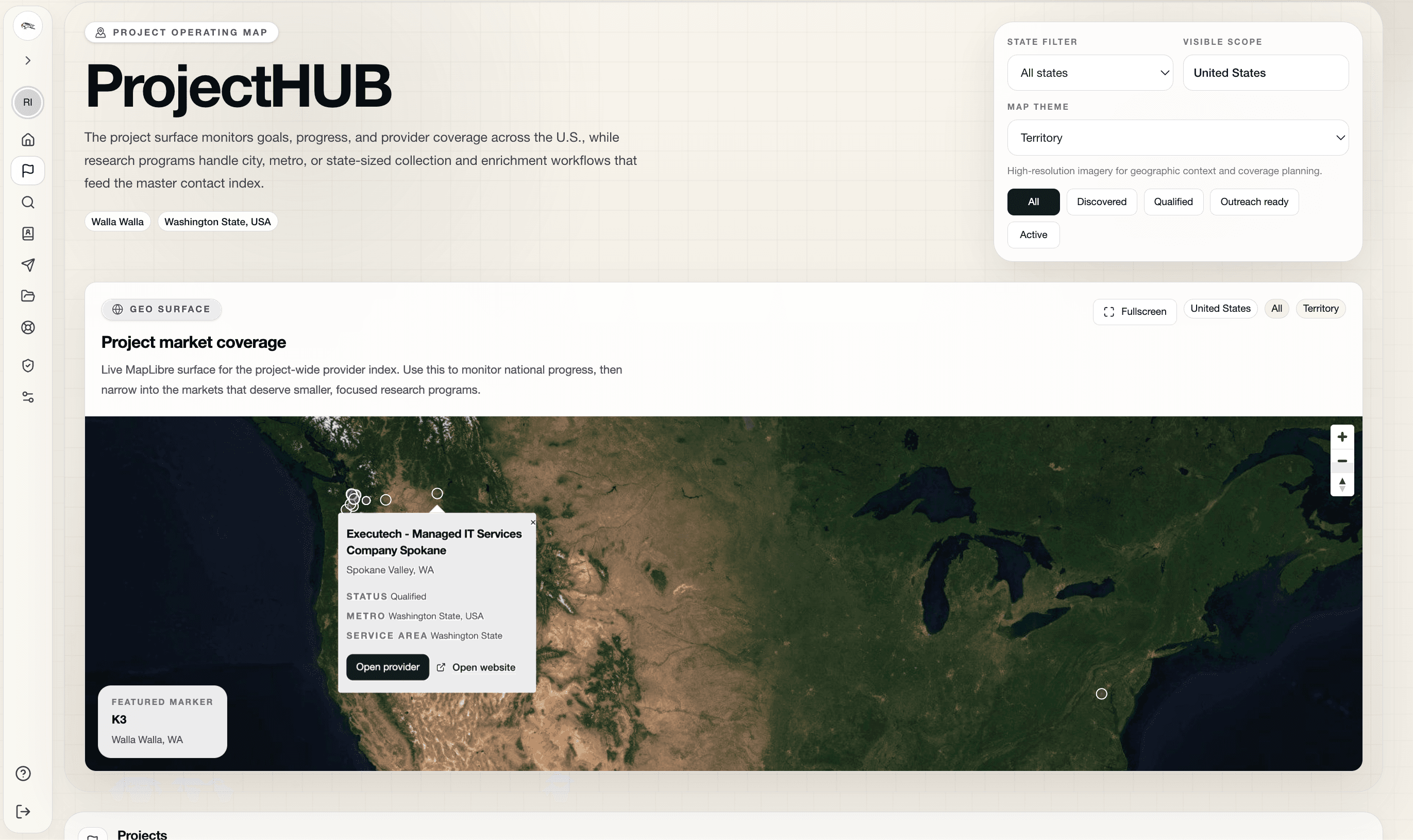

CloudRaven helps teams discover and enrich market segments, run agent-assisted research, preserve evidence through program workflows, and activate qualified leads with more operator control than traditional broad automation provides.

CloudRaven Workspaces drive project, program, and research outcomes through targeted personalization and integrations with the outreach flows your teams already use.

CloudRaven Labs is the company and production systems team behind ResumeRavenPro. The product is relaunching to help job seekers move beyond resume-only workflows and create stronger proof of value through projects, public work, sharper positioning, and guided next steps.

The product helps people identify public artifacts, portfolio work, and proof-of-work projects that support the next role.

Job seekers get a more intentional path across resumes, cover letters, networking, referrals, and next-step planning.

Job-seeker journey

ResumeRavenPro is built around a practical promise: help people create leverage, show value, and take the next meaningful step with more intention.

Clarify the target role and career narrative

Tailor materials around real opportunities

Turn projects and learning into public proof

Plan the next meaningful job-search action

Launch window

Friends-and-family onboarding is open for early job seekers, career supporters, and partner conversations.

Brand relationship

ResumeRavenPro is the product. CloudRaven Labs is the company and workflow systems team behind it.

CloudRaven is strongest when the work needs more than off-the-shelf automation. These offers are built for teams that need market discovery and enrichment, HubSpot-ready activation, agent-assisted research, or a targeted analytics product with real domain quality.

Most engagements start with one messy workflow, one decision chain that matters, and a need to turn scattered data, research, and operator judgment into something usable.

How engagements usually start

Discovery program first

Start by defining the market, enrichment logic, and routing model so the rest of the work has a cleaner operational foundation.

Activation path next

Connect qualified leads, approved messaging, and operator checkpoints so activation into HubSpot happens with context instead of guesswork.

Targeted product launch

Carry the strongest research or workflow pattern into a focused analytics product when the use case is specific enough to warrant its own surface.

Managed Market Discovery

CloudRaven helps teams define the market, enrich accounts, qualify likely fits, and turn fragmented signals into a discovery program that operators can actually run.

Best fit

Best when the market is real but the account map is incomplete, enrichment is inconsistent, or the team needs stronger criteria before it scales outreach or onboarding.

Starts with

A market scan, target-shape review, enrichment pass, and a working definition of how discovery should roll into qualification, routing, and follow-through.

Typical outputs

HubSpot Activation Systems

CloudRaven connects research and enrichment work to lead activation so approved records, drafts, and follow-up workflows can move into HubSpot without losing the context that made them worth contacting.

Best fit

Best when the hard part is not just finding leads, but getting the right ones into HubSpot with enough evidence, review structure, and workflow clarity to support follow-through.

Starts with

A review of the qualification path, field mapping, approval checkpoints, and the exact point where a record or draft should land inside HubSpot.
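
As a rough illustration of what a field mapping and approval checkpoint can look like, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `LeadRecord` shape, the `FIELD_MAP` property names, and the rule that a record needs evidence plus a named approver are hypothetical, and none of it reflects HubSpot's actual schema or CloudRaven's implementation.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

# Hypothetical mapping from internal research fields to CRM property names.
# The property names are illustrative only, not HubSpot's real schema.
FIELD_MAP: Dict[str, str] = {
    "company_name": "name",
    "website": "domain",
    "fit_summary": "research_notes",
}


@dataclass
class LeadRecord:
    """A qualified lead plus the context that made it worth contacting."""
    company_name: str
    website: str
    fit_summary: str
    evidence_urls: List[str] = field(default_factory=list)
    approved_by: Optional[str] = None  # filled in by a human reviewer


def ready_for_activation(record: LeadRecord) -> bool:
    """Assumed checkpoint: at least one evidence link and a named approver."""
    return bool(record.evidence_urls) and record.approved_by is not None


def to_crm_payload(record: LeadRecord) -> Dict[str, str]:
    """Translate internal fields to CRM properties; refuse unapproved records."""
    if not ready_for_activation(record):
        raise ValueError("record has not cleared the approval checkpoint")
    return {crm_key: getattr(record, src) for src, crm_key in FIELD_MAP.items()}
```

The point of the sketch is the ordering: the checkpoint runs before any payload is built, so a record cannot reach the CRM without its evidence and reviewer attached.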

Typical outputs

Geospatial + Temporal Research

CloudRaven designs research workflows for geospatial and temporal use cases where sources, citations, and domain context matter more than generic model fluency.

Best fit

Best when the work depends on maps, regions, time series, public data, reference data, or synthesis that needs to stay inspectable instead of sounding plausible.

Starts with

A research question review, source plan, and workflow design for how the agent should gather, interpret, and hand findings back to a human reviewer.

Typical outputs

Targeted Analytics Products

CloudRaven helps shape and launch analytics products for tightly defined users, questions, and datasets where quality, trust, and workflow fit matter more than broad but shallow coverage.

Best fit

Best when you have a specific analytics use case, a clear user promise, and a need to reach production with stronger domain quality than general-purpose systems typically provide.

Starts with

A product framing pass around the target user, decision path, data inputs, evidence standard, and the smallest launchable version worth validating.

Typical outputs

ChatGPT-backed OpenClaw access, Symphony orchestration, realtime voice, and longer-running goals all point in the same direction. The hard part is deciding what to delegate first, what the agent may do, and where people stay in control.

First output

Choose one workflow worth delegating

Define tools, limits, and review points

Map the smallest safe prototype path
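
The three steps above can be sketched as a small policy object, assuming a Python prototype. The `DelegationPolicy` class, the tool names, and the allow/review/deny outcomes are all hypothetical and stand in for whatever the Starter Kit actually produces.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class DelegationPolicy:
    """Hypothetical policy for one delegated workflow."""
    workflow: str                     # the single workflow chosen for delegation
    allowed_tools: FrozenSet[str]     # tools the agent may call at all
    max_steps: int                    # hard limit on agent actions per run
    review_required: FrozenSet[str]   # tools whose use needs human sign-off


def check_action(policy: DelegationPolicy, tool: str, steps_taken: int) -> str:
    """Return 'allow', 'review', or 'deny' for a proposed agent action."""
    if tool not in policy.allowed_tools or steps_taken >= policy.max_steps:
        return "deny"
    if tool in policy.review_required:
        return "review"
    return "allow"


# Example: a research workflow where drafting outreach needs a human review.
policy = DelegationPolicy(
    workflow="account-research",
    allowed_tools=frozenset({"search", "draft_email"}),
    max_steps=5,
    review_required=frozenset({"draft_email"}),
)
```

Keeping the policy as plain, immutable data makes it easy for a human to read and change before any agent code runs against it.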

CloudRaven works best where market discovery, enrichment, activation, research, and product quality all matter at once. If you have a real use case that generic tooling keeps flattening, this is the right place to start.

Good for fragmented markets where account discovery, enrichment, qualification, and routing need a clearer operating model.

Good when the team needs qualified leads, approved drafts, and cleaner handoff into HubSpot without losing context.

Good for place-based, time-based, or public-data work that needs evidence, citations, and a human-review loop.

Good when a focused use case needs stronger quality, trust, and domain fit than broad general models usually provide.

Public inquiry

No account creation required. A short note about the workflow, market, or product is enough to start.

Read how CloudRaven thinks about market discovery, activation systems, agent-assisted research, geospatial public-data workflows, and focused analytics products.

May 18, 2026

ResumeRavenPro is relaunching as a production service from CloudRaven Labs, built to help job seekers move beyond resume polishing and create visible proof of value.

May 2, 2026

OpenClaw shows agents moving closer to where work happens. CloudRaven's Agent Workflow Starter Kit helps teams decide what to delegate, where to set boundaries, and how to keep humans in the loop.

April 14, 2026

If you care about reliable AI over federal open data, this is the moment to join. CloudRaven Labs is actively recruiting builders, researchers, user advocates, data stewards, product advisors, and reviewers for the 2026 TOP Sprint.

A sprint-style collaboration to prototype real, human-in-the-loop workflows that pull from authoritative Census sources and keep provenance front and center. Sprint work is active in spring 2026, and new collaborators can start by creating an account and then submitting a join request inside the private workspace.

This overview introduces the TOP Sprint, the mission behind the work, and where new collaborators, user advocates, data stewards, and product advisors can make an immediate impact. If the program feels like the right fit, create your CloudRaven account and submit your join request to be considered for the sprint workspace.

1. Create your CloudRaven account.

2. Sign in to the private workspace.

3. Submit the TOP Sprint join request so the team can route you into the right collaboration area.

CloudRaven Labs

U.S. Census COIL

Current Focus

Launch trendsights.io with agentic, human-in-the-loop research use cases that demonstrate:

Quick links for MCP, Census APIs, and the sprint program

| Resource | Note |
|---|---|
| Open standard to connect tools and data to LLMs reliably. | |
| Official endpoints, metadata, and API user guide. | |
| Bring official Census Bureau statistics to AI assistants via MCP. | |
| Program details and sprint challenges from COIL. | |
| Project site and development documentation. | |

Help with architecture, UX, prompt design, evaluation, or docs. We’ll plug you into a task area quickly.

New collaborators begin with account creation, then move into the join request inside the workspace so CloudRaven can coordinate lanes, onboarding, and first tasks.

A small, active team spanning program leadership, partner systems, research, and geospatial open-data work that turns hard-to-structure workflows into something teams can actually run.

Connect directly on LinkedIn and get a quick sense of where each person helps the sprint move.

Rob Schaper

Role

Program Lead

Co-Founder

CloudRaven Labs • Tech Team

What They Own

Rob leads the CloudRaven sprint lane across trustworthy agent workflows, evidence-first research systems, and the public story around the work. He helps hold together the strategy, operating rhythm, and translation from early problem framing into something teams can build, review, and trust.

Focus

Brian Schaper

Role

Principal Solutions Architect

Co-Founder

CloudRaven Labs • Partner Systems & Activation

What They Own

Brian supports CloudRaven's partner systems and activation work across outreach, onboarding, activation, reporting, and direct partner-facing execution. His role helps turn field requirements into practical architecture and cleaner operating support.

Focus

Chandrika Kaul

Role

Research Consultant

CloudRaven Labs • Geospatial & Open Data

What They Own

Chandrika strengthens the team's research and geospatial perspective across open-data analysis, map-aware interpretation, and evidence development. Her background spans Microsoft Bing Maps, Open Data Watch, search, reference data, and long-form research support.

Focus